Index Error Handling: A Comprehensive Guide to ArgumentOutOfRangeException

ArgumentOutOfRangeException: Handling Index Errors in Arrays and Collections

ArgumentOutOfRangeException is a commonly encountered exception in .NET languages such as C#. It is thrown when a method receives an argument that is not null but falls outside the range of values the method accepts. The exception exposes two properties, ParamName and ActualValue, that help identify its cause.

What are ParamName and ActualValue?

The ParamName property holds the name of the parameter that received the invalid argument, while the ActualValue property holds the offending value, if the code that threw the exception supplied one.
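As a minimal sketch of how these two values can be supplied and read, the example below throws the exception from a validator and then inspects it. The names VolumeControl, SetVolume, and Describe are hypothetical and exist only for illustration; the three-argument constructor and both properties are part of .NET.

```csharp
using System;

static class VolumeControl
{
    // Hypothetical validator: accepts only values from 0 to 100.
    public static void SetVolume(int percent)
    {
        if (percent < 0 || percent > 100)
        {
            // Pass the parameter name and the offending value so that
            // ParamName and ActualValue are populated on the exception.
            throw new ArgumentOutOfRangeException(
                nameof(percent), percent, "Volume must be between 0 and 100.");
        }
        // A real implementation would apply the volume here.
    }

    // Hypothetical helper: formats the two diagnostic properties.
    public static string Describe(ArgumentOutOfRangeException ex)
    {
        return $"Parameter: {ex.ParamName}, value: {ex.ActualValue}";
    }
}

class Program
{
    static void Main()
    {
        try
        {
            VolumeControl.SetVolume(150);
        }
        catch (ArgumentOutOfRangeException ex)
        {
            // Prints "Parameter: percent, value: 150"
            Console.WriteLine(VolumeControl.Describe(ex));
        }
    }
}
```

Note that ActualValue is only set when the throwing code passes it to the constructor, so it may be null for exceptions raised elsewhere.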

Where Is ArgumentOutOfRangeException Commonly Thrown?

In most cases, an ArgumentOutOfRangeException is the result of developer error. If the argument’s value comes from another method call or from user input before it is passed to the method that throws the exception, validate the argument before making the call.
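One way to validate user input before the call might look like the sketch below. InputValidation and TryParseIndex are hypothetical names introduced for illustration; int.TryParse is the standard .NET parsing method.

```csharp
using System;
using System.Collections.Generic;

static class InputValidation
{
    // Hypothetical helper: returns true only when the text parses as an
    // integer that is a valid index into a collection of the given size.
    public static bool TryParseIndex(string input, int count, out int index)
    {
        return int.TryParse(input, out index) && index >= 0 && index < count;
    }
}

class Program
{
    static void Main()
    {
        var names = new List<string> { "Ada", "Grace", "Linus" };

        // Simulated user input; a real program might read it from Console.ReadLine().
        foreach (var input in new[] { "1", "7", "-2", "abc" })
        {
            if (InputValidation.TryParseIndex(input, names.Count, out int index))
            {
                Console.WriteLine($"'{input}' selects {names[index]}");
            }
            else
            {
                Console.WriteLine($"'{input}' is not a valid index");
            }
        }
    }
}
```

Because the range check runs before the indexer is used, only the input "1" reaches names[index]; the other inputs are rejected without any exception being thrown.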

The exception can occur in many different situations. Some common scenarios include:

- Array or Collection Index: When retrieving an element from a collection such as List<T> using an index that lies outside the collection’s bounds. Note that indexing a plain array out of bounds throws the related IndexOutOfRangeException instead.

- String Manipulation: When a string method such as Substring, Remove, or Insert receives a start index or length that lies outside the string.

- Numeric Ranges: When a numeric argument is outside the range that an operation accepts. For example, constructing a DateTime with a month value of 13 throws this exception.

- Custom Validation: Developers can also throw ArgumentOutOfRangeException explicitly in their code when implementing custom validation logic for function or method parameters.
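The short sketch below probes a few built-in calls to show which ones throw ArgumentOutOfRangeException. OutOfRangeProbe and Throws are hypothetical helpers written for this illustration; the calls being probed are standard .NET members.

```csharp
using System;
using System.Collections.Generic;

static class OutOfRangeProbe
{
    // Hypothetical helper: runs the action and reports whether it
    // threw ArgumentOutOfRangeException.
    public static bool Throws(Action action)
    {
        try
        {
            action();
            return false;
        }
        catch (ArgumentOutOfRangeException)
        {
            return true;
        }
    }
}

class Program
{
    static void Main()
    {
        var list = new List<int> { 1, 2, 3 };

        // List<T> indexer with an index past the end: prints True
        Console.WriteLine(OutOfRangeProbe.Throws(() => { _ = list[5]; }));

        // Substring with a start index past the end of the string: prints True
        Console.WriteLine(OutOfRangeProbe.Throws(() => "abc".Substring(5)));

        // DateTime with a month outside 1 to 12: prints True
        Console.WriteLine(OutOfRangeProbe.Throws(() => new DateTime(2024, 13, 1)));

        // A valid removal does not throw: prints False
        Console.WriteLine(OutOfRangeProbe.Throws(() => list.RemoveAt(0)));
    }
}
```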

ArgumentOutOfRangeException is thrown extensively by classes in the System.Collections and System.Collections.Generic namespaces. A common case is removing an item at a specific index from a collection: if the collection is empty, or if the index passed as the argument is negative or not less than the collection’s size, the exception is thrown.

How Do Developers Handle ArgumentOutOfRangeException?

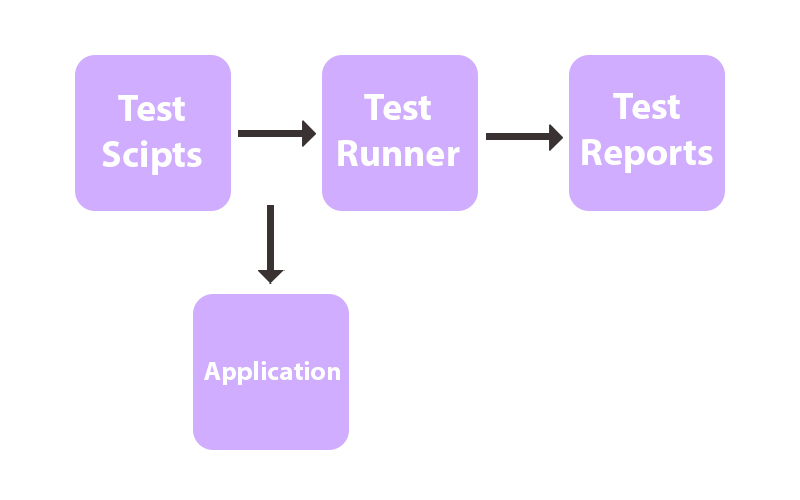

To handle this exception, developers can use try-catch blocks to catch and respond to it appropriately. When caught, the application can provide an error message or take corrective action, such as prompting the user for valid input or logging the issue for debugging purposes.

Here is an example that triggers an ArgumentOutOfRangeException:

    using System;
    using System.Collections.Generic;

    class Program
    {
        static void Main(string[] args)
        {
            try
            {
                // The list is empty, so no index is valid for RemoveAt.
                var nums = new List<int>();
                int index = 1;
                Console.WriteLine("Trying to remove number at index {0}", index);
                nums.RemoveAt(index);
            }
            catch (ArgumentOutOfRangeException ex)
            {
                Console.WriteLine("There is a problem!");
                Console.WriteLine(ex);
            }
        }
    }

    /* Output:
    Trying to remove number at index 1
    There is a problem!
    System.ArgumentOutOfRangeException: Index was out of range. Must be non-negative and less than the size of the collection. (Parameter 'index')
       at System.Collections.Generic.List`1.RemoveAt(Int32 index)
       at Program.Main(String[] args) in \C#\ConsoleApp1\Program.cs:line 14 */

To prevent the exception, we can check that the index is non-negative and less than the collection’s Count before removing an item. An index that passes both checks also guarantees that the collection is not empty. We can modify the code in the try block as follows:

    var nums = new List<int>() { 10, 11, 12, 13, 14 };
    var index = 2;
    Console.WriteLine("Trying to remove number at index {0}", index);

    // Remove only when the index is within the bounds of the list.
    if (index >= 0 && index < nums.Count)
    {
        nums.RemoveAt(index);
        Console.WriteLine("Number at index {0} successfully removed", index);
    }

    /* Output:
    Trying to remove number at index 2
    Number at index 2 successfully removed
    */
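If the same bounds check is needed in several places, it could be wrapped in a reusable helper. The sketch below is one possible shape; TryRemoveAt is a hypothetical extension method, not part of .NET.

```csharp
using System;
using System.Collections.Generic;

static class ListExtensions
{
    // Hypothetical extension method: removes the item at the given index
    // and returns true, or returns false when the index is out of range.
    public static bool TryRemoveAt<T>(this List<T> list, int index)
    {
        if (list == null)
        {
            throw new ArgumentNullException(nameof(list));
        }

        if (index < 0 || index >= list.Count)
        {
            return false;
        }

        list.RemoveAt(index);
        return true;
    }
}

class Program
{
    static void Main()
    {
        var nums = new List<int> { 10, 11, 12, 13, 14 };

        Console.WriteLine(nums.TryRemoveAt(2));            // True
        Console.WriteLine(nums.TryRemoveAt(10));           // False
        Console.WriteLine(new List<int>().TryRemoveAt(0)); // False
    }
}
```

Returning a bool follows the same convention as int.TryParse: the caller decides how to react to an invalid index without needing a try-catch block.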

In summary, ArgumentOutOfRangeException signals that an argument’s value falls outside the range a method accepts. Validating arguments up front, and handling the exception where it can still occur, lets developers detect and respond to invalid input gracefully, which helps prevent unexpected failures and incorrect behavior.
